Quantized Local LLMs: 4-bit vs 8-bit Performance Analysis

Compare 4-bit vs 8-bit quantization for local LLMs. See quality benchmarks, speed improvements, and VRAM savings to choose the right quantization for...
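
The VRAM side of the trade-off comes down to simple arithmetic: weight memory scales with bits per parameter. Halving the bits roughly halves the weight footprint. The quality cost grows as the integer grid gets coarser. The sketch below illustrates both effects under stated assumptions:

- It counts weights only. It ignores the KV cache, activations, and per-format metadata.
- It uses a simple per-tensor symmetric absmax scheme. Real local-LLM formats typically use per-block or per-group scales and differ in detail.
- The function names and the sample weights are hypothetical, chosen for illustration.

```python
def weight_memory_gib(num_params: int, bits: int) -> float:
    """Approximate weight-only memory in GiB (no KV cache, activations, or format overhead)."""
    return num_params * bits / 8 / 2**30


def quantize_symmetric(values: list[float], bits: int) -> tuple[list[int], float]:
    """Hypothetical per-tensor symmetric absmax quantization to signed integers.

    The grid is clamped to [-qmax, qmax], e.g. [-127, 127] for 8-bit and [-7, 7] for 4-bit.
    """
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(v) for v in values) / qmax
    if scale == 0:
        scale = 1.0  # all-zero tensor: any scale reproduces it exactly
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map integer codes back to approximate float weights."""
    return [x * scale for x in q]


def max_abs_error(values: list[float], bits: int) -> float:
    """Largest absolute round-trip error after quantize -> dequantize."""
    q, scale = quantize_symmetric(values, bits)
    return max(abs(a - b) for a, b in zip(values, dequantize(q, scale)))


if __name__ == "__main__":
    params = 7_000_000_000  # illustrative 7B-parameter model
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gib(params, bits):.2f} GiB")

    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # made-up sample values
    for bits in (8, 4):
        print(f"{bits}-bit max abs error: {max_abs_error(weights, bits):.4f}")
```

With a single scale per tensor, 4-bit leaves only 15 usable levels versus 255 for 8-bit, so the round-trip error per weight is much larger. This is why practical 4-bit formats add finer-grained scales to recover quality.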

Learn GitLab CI/CD for React: set up automated testing, building, and deployment to GitLab Pages. Complete guide with real examples and practical tips...

Should you use AI for automation? Learn when AI agents add value vs. traditional automation, common mistakes small teams make, and a practical decisio...

ByteDance's Seedance 2.0 brings 20-second AI video generation with realistic physics and improved prompt adherence. Learn what's new, how to access th...

Compare Seedance 2.0 and Veo 3.1 across speed, realism, cinematic motion, audio, formats, and creative control—so you can pick the right AI video mode...

Read Best Crypto Payments Gateways in 2026 and learn Web with SitePoint. Our web development and design tutorials, courses, and books will teach you H...

Discover the best payment gateways in France for 2026. Explore top payment methods and solutions to choose the best online payment service for your bu...

Best Payment Gateway for Subscriptions & Recurring Payment: 2026. Find the best payment gateway for your subscription business in 2026! Process re...

We ran 100 real-world coding tasks through Claude Code and Cursor to measure tokens per second, code accuracy, and total cost per task. Here's the dat...

Ollama is perfect for local development, but when your team grows past 3 concurrent users, performance drops dramatically. This guide shows you exactl...

Build your own private Copilot alternative that runs entirely locally. Zero subscription fees, complete privacy, and surprisingly good code completion...

Understanding model quantization is crucial for running LLMs locally. We break down the math, trade-offs, and help you choose the right format for you...

Apple's unified memory meets NVIDIA's dedicated VRAM. We benchmark both for local LLM running to help you choose the right hardware. Continue reading...

Build a question-answering system over your own documents using local models. Keep your data private while leveraging AI for knowledge retrieval. Cont...

Stop buying GPUs for everyone. Here's how to set up a shared local AI infrastructure that serves your entire engineering team from a single workstatio...

We benchmark three leading open-source coding models on local hardware to determine the best choice for developer productivity. Continue reading MiniM...

Set up an automated code review system using local LLMs. Catch bugs, security issues, and style violations before they reach production. Continue read...

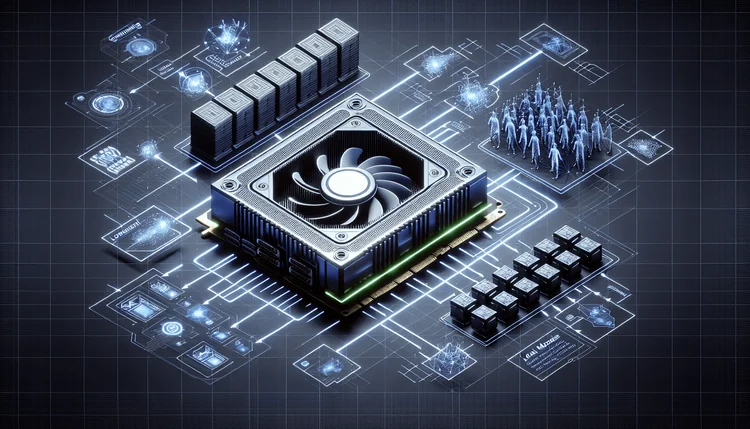

Learn how to efficiently run multiple LLM models simultaneously on a single GPU through proper memory management and model orchestration. Continue rea...

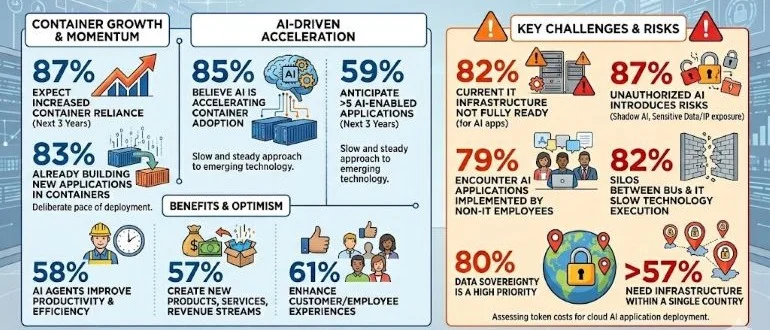

A global survey of 1,600 cloud, IT, and engineering executives published today finds a full 87% expect their organizations’ reliance on containe...

Explore how an artifact access plane can improve Kubernetes platform performance, scalability, and security by standardizing how artifacts are governe...