Best Buy is STILL selling the Galaxy S26 Ultra for just $399 with trade-in — plus get a $100 gift card, just for kicks

The preorder period may have come to a close, but you can still take advantage of Best Buy's impressive Galaxy S26 Ultra deal.

The Galaxy S26 lineup has finally hit store shelves, and I'm gathering all of the best deals into this guide.

Learn how to efficiently run multiple LLMs simultaneously on a single GPU through proper memory management and model orchestration.

We benchmark three leading open-source coding models on local hardware to determine the best choice for developer productivity.

Stop buying GPUs for everyone. Here's how to set up a shared local AI infrastructure that serves your entire engineering team from a single workstatio...

Build a question-answering system over your own documents using local models. Keep your data private while leveraging AI for knowledge retrieval.

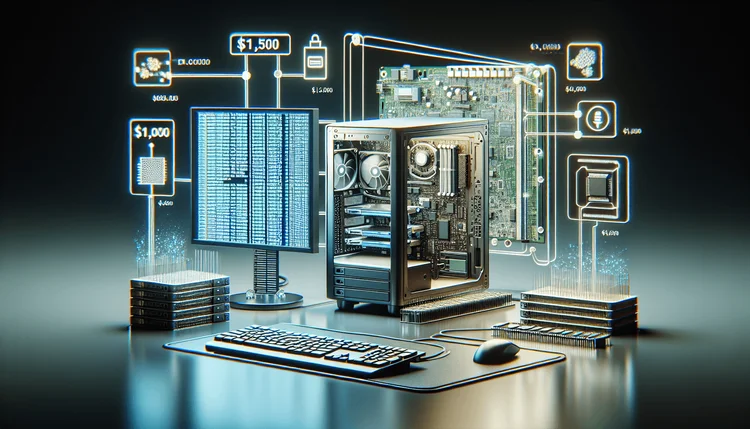

Running a reasoning model locally doesn't require a $10,000 workstation. Here's how to build a capable DeepSeek-R1 setup on a budget.

Understanding model quantization is crucial for running LLMs locally. We break down the math, trade-offs, and help you choose the right format for you...

Build your own private Copilot alternative that runs entirely locally. Zero subscription fees, complete privacy, and surprisingly good code completion...

T-Mobile, Verizon, and AT&T are all running deals that could get you a free Galaxy S26 Ultra — you just need to meet the eligibility requirements.

Kubernetes is the OS for modern apps — but enterprises need platforms, not just clusters. Focus on standardized paved paths, supply‑chain security (si...

Compare 4-bit vs 8-bit quantization for local LLMs. See quality benchmarks, speed improvements, and VRAM savings to choose the right quantization for...

Compare Mac and PC hardware for running local LLMs. See M3 Pro/Max vs RTX 4090/3090 benchmarks, unified memory vs VRAM, and recommendations for every...

Calculate the true cost of self-hosted LLMs vs OpenAI, Anthropic, and other cloud APIs. Includes hardware, electricity, maintenance, and hidden costs...

Master vLLM production deployment with Docker, Kubernetes, and monitoring. Learn PagedAttention optimization, multi-GPU setup, and OpenAI-compatible A...

Deploy DeepSeek R1 locally with our step-by-step guide. Learn hardware requirements, Ollama and vLLM setup, quantization options, and performance opti...

Claude Code works best when properly integrated into your existing workflow. Here's the complete setup guide for VS Code including extensions, keybind...

Running LLMs locally for coding is now viable. We measured latency, token throughput, and privacy tradeoffs between local Ollama/CodeLlama setups and...

Three AI-native IDEs, three different philosophies. We compare Cursor's agentic approach, Claude Code's context awareness, and Cody's codebase intelli...

Reports of a "Great British Firewall" are exaggerated. And even if they wanted to, here's why it would be virtually impossible.