The Complete Developer's Guide to Vibe Coding: From Skeptic to 10x Engineer

Comprehensive guide covering The Complete Developer's Guide to Vibe Coding: From Skeptic to 10x Engineer with practical implementation details.

A tactical tutorial focused solely on the `anthropics/claude-code` tool. **Key Takeaways:** - Setting up Claude Code for maximum context awareness. -...

A technical look at `moeru-ai/airi`, focusing on the 'personality' and 'soul' aspect of coding companions. **Focus:** - Why personality matters in lon...

Comprehensive guide covering State Management for Long-Running Agents: Redis vs. Postgres with practical implementation details.

Comprehensive guide covering Optimizing Token Usage: Context Compression Techniques with practical implementation details.

Comprehensive guide covering The End of localhost? Why Remote Dev Environments are Winning with practical implementation details.

Pulse update on the surprising partnership between Motorola and GrapheneOS. What this means for enterprise security and the de-Googled mobile market....

Three AI-native IDEs, three different philosophies. We compare Cursor's agentic approach, Claude Code's context awareness, and Cody's codebase intelli...

Running LLMs locally for coding is now viable. We measured latency, token throughput, and privacy tradeoffs between local Ollama/CodeLlama setups and...

Claude Code works best when properly integrated into your existing workflow. Here's the complete setup guide for VS Code including extensions, keybind...

Deploy DeepSeek R1 locally with our step-by-step guide. Learn hardware requirements, Ollama and vLLM setup, quantization options, and performance opti...

Master vLLM production deployment with Docker, Kubernetes, and monitoring. Learn PagedAttention optimization, multi-GPU setup, and OpenAI-compatible A...

Calculate the true cost of self-hosted LLMs vs OpenAI, Anthropic, and other cloud APIs. Includes hardware, electricity, maintenance, and hidden costs...

Compare Mac and PC hardware for running local LLMs. See M3 Pro/Max vs RTX 4090/3090 benchmarks, unified memory vs VRAM, and recommendations for every...

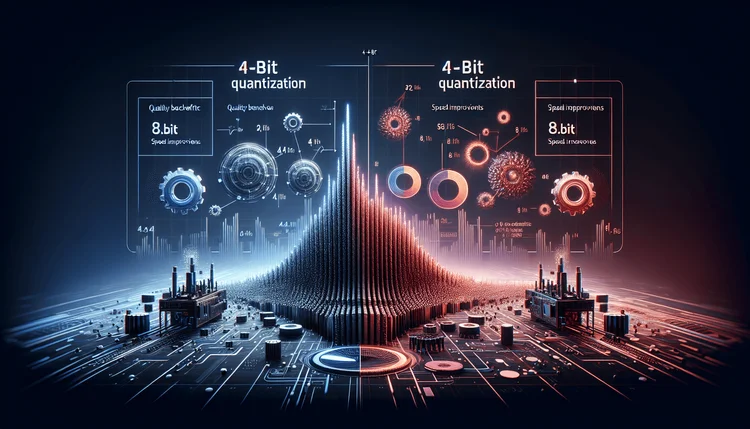

Compare 4-bit vs 8-bit quantization for local LLMs. See quality benchmarks, speed improvements, and VRAM savings to choose the right quantization for...

Build your own private Copilot alternative that runs entirely locally. Zero subscription fees, complete privacy, and surprisingly good code completion...

Understanding model quantization is crucial for running LLMs locally. We break down the math, trade-offs, and help you choose the right format for you...

Running a reasoning model locally doesn't require a $10,000 workstation. Here's how to build a capable DeepSeek-R1 setup on a budget.

Build a question-answering system over your own documents using local models. Keep your data private while leveraging AI for knowledge retrieval.

Stop buying GPUs for everyone. Here's how to set up a shared local AI infrastructure that serves your entire engineering team from a single workstatio...